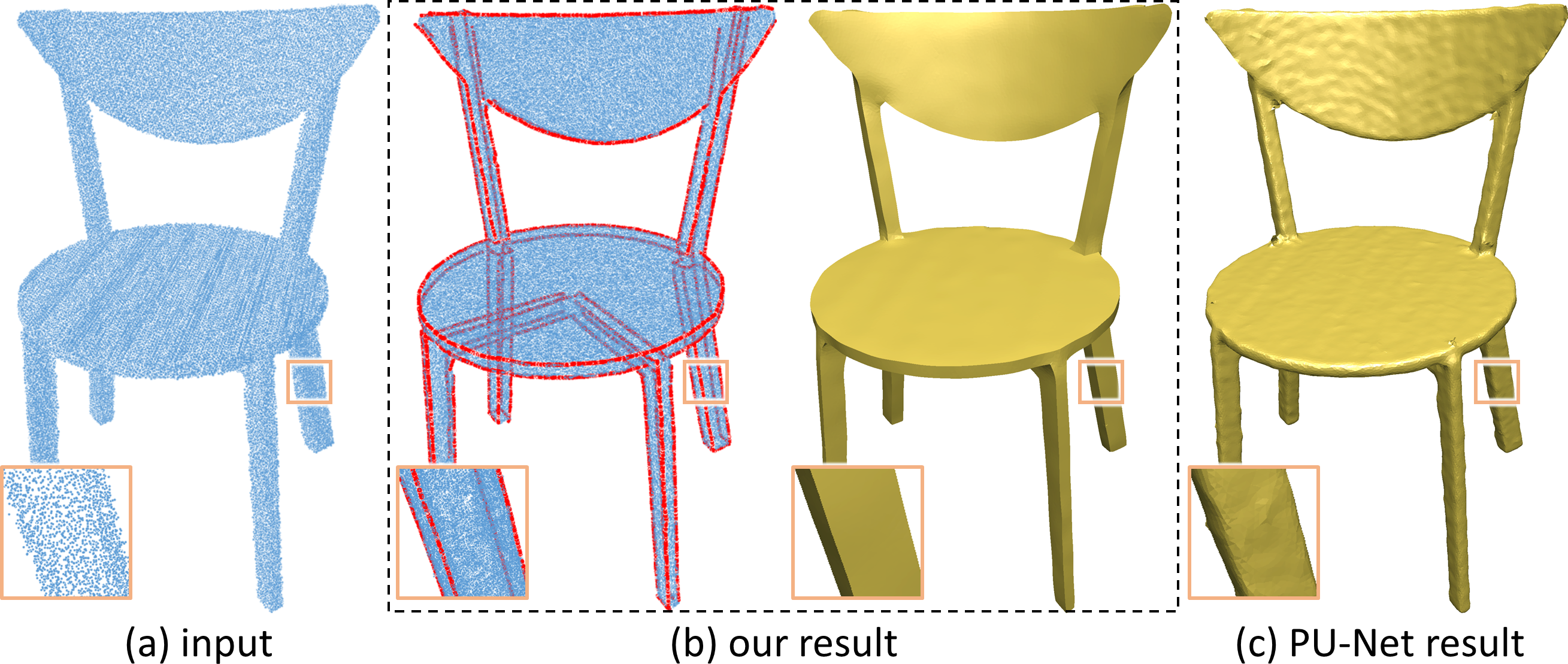

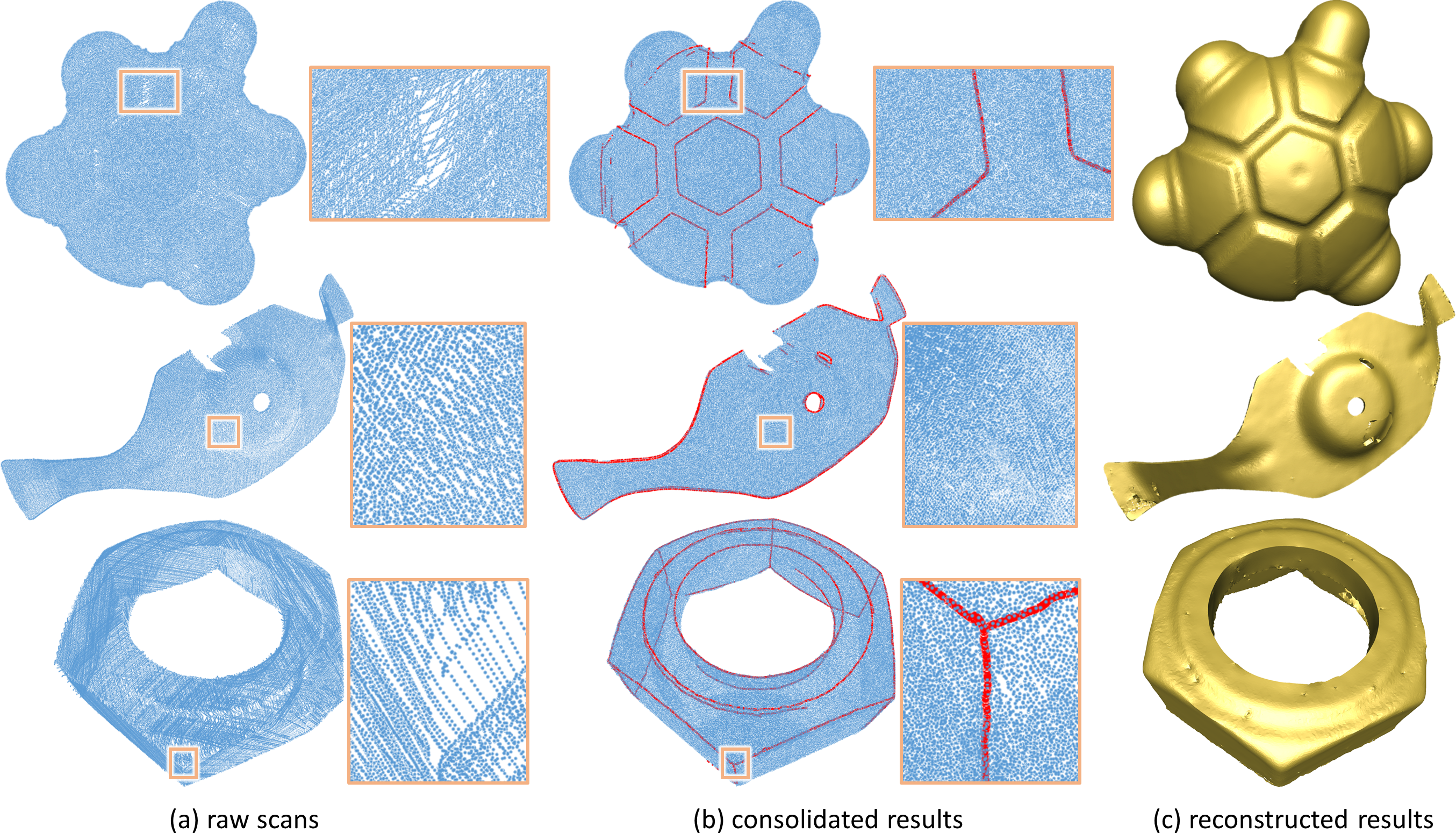

Pipeline of EC-Net

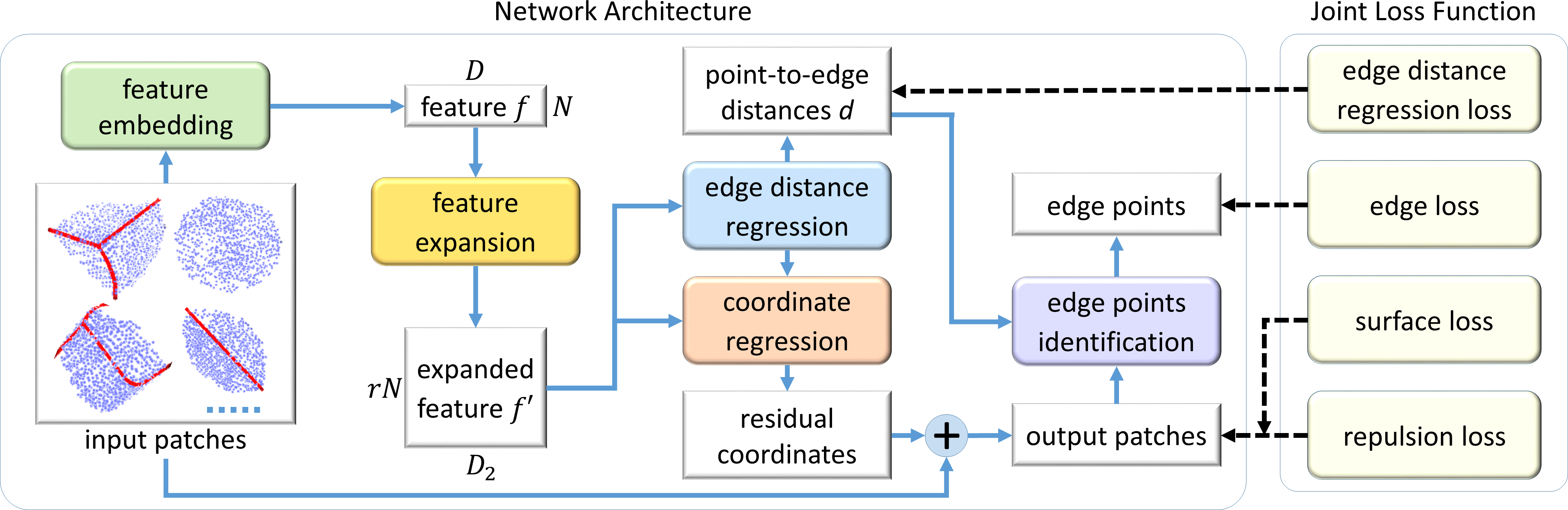

Patches Extraction

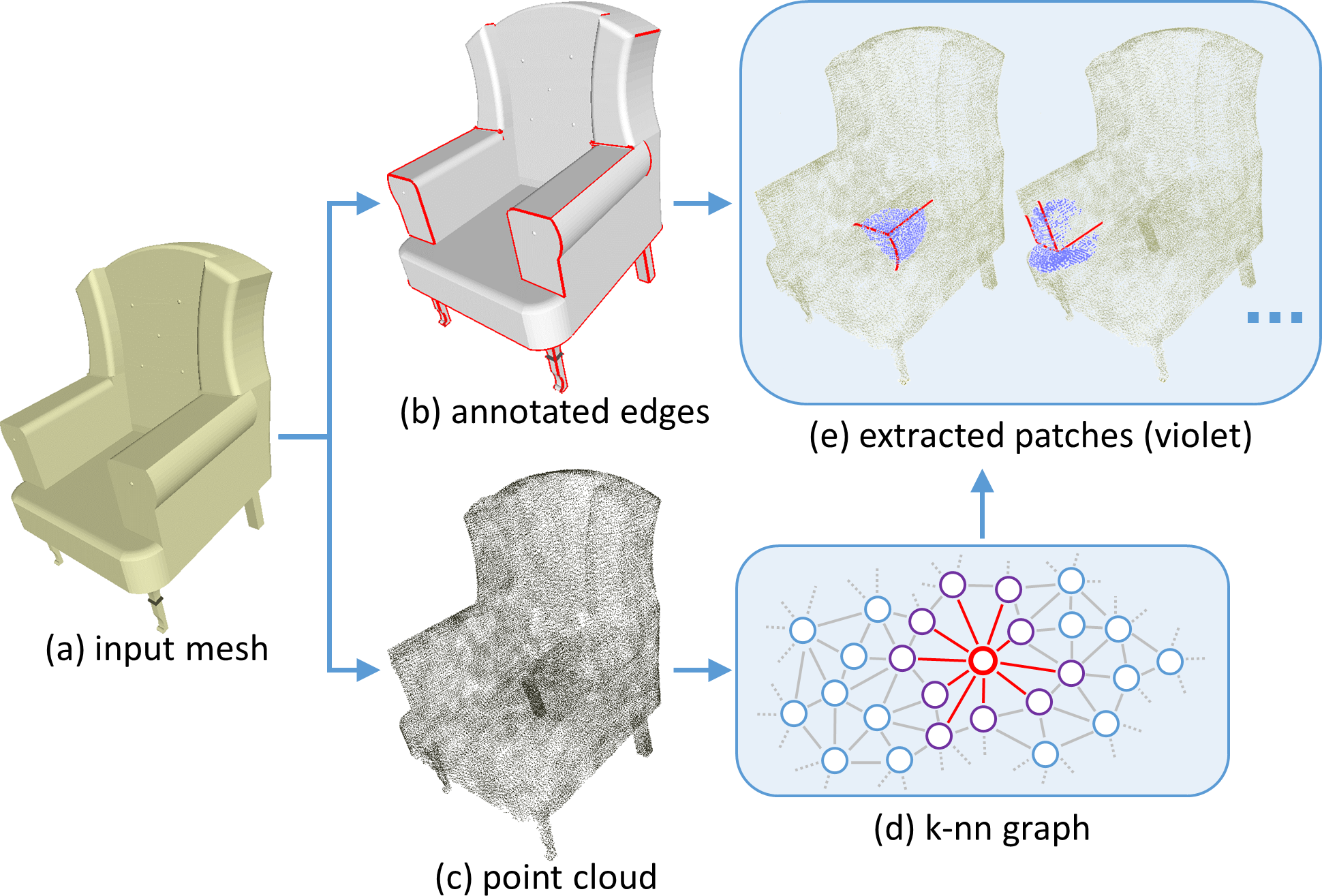

Lequan Yu, Xianzhi Li, Chi-Wing Fu, Daniel Cohen-Or, Pheng-Ann Heng. EC-Net: an Edge-aware Point set Consolidation Network. In ECCV, 2018.

Acknowledgements